I recently read a post in a Facebook group dedicated to WordPress, written by a young blogger. Really, a blogger! Of course he has social media accounts, but he actually blogs. About writing for magazines, about going on TV… just awesome! And he asked what the experts in the Facebook group would advise him to improve on his blog.

What to improve, experts?

Only about one percent of the group’s members are experts, and perhaps not even all of those who reply are, but they share their opinions anyway. Either way, he didn’t get many answers.

I often do such quick site analyses with recommendations, so I have developed a methodology that results in a basic overview of what’s not optimal on the site and what can be improved.

So I checked out his website and made a recommendation for him. (Of course, I’m not giving the specific URL here; this is a procedure that can be applied to many unmaintained sites.)

Main points of the brief analysis

When I do a brief analysis of the state of the website, I focus on several levels:

- the technical conditions of the software,

- legal formalities,

- data profile,

- design and UX including responsiveness,

- SEO and findability in search engines,

- speed tests,

- subjective impression.

I’m aware that this view is superficial and lacks context I don’t have. It is, however, intended for website owners who need motivation or someone to show them the way: people who simply don’t know and need a helping hand. That is also why the subjective impression is included. It is my opinion, meant as a contribution and a motivation for change. That’s what I’m trying to do.

Since these are quick analyses and insights, I only point out the things that are wrong; I don’t comment much on what is right.

Technical condition

I use a browser add-on that, in 99% of cases, shows me what software a site is running and, if it’s WordPress, the WordPress version and key plugins.

This site runs an outdated version of WordPress, and significantly so: it is two and a quarter years old. This is the first big problem. Dozens of bugs have been found and fixed since then, and that counts only WordPress itself, not plugins. The theme used is Rosemary version 1.6, which is nearly three years old. Only two updates have been released in the meantime, which suggests the theme is no longer actively maintained and is becoming technical debt. No child theme is used, which is not a fault in itself, but it is a shortcoming with regard to possible further development of the theme. It can be corrected.

The bigger problem I see is that the site doesn’t use HTTPS consistently, so http and https content is mixed. This means that

- an attacker can use the unsecured variant to try to gain access,

- there are duplicate addresses,

which Google doesn’t like. A clarification prompted by a reader’s comment: Google does not explicitly impose a penalty, but when duplicates are allowed to exist, the ranking signals are spread across all the duplicate addresses. That is the bad part: the goal is to have related content concentrated at one URL, not several. Thanks to Zdeněk Dvořák for the comment.
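A rough way to spot mixed content yourself is to scan a page’s HTML for resources still loaded over plain http. The following is only a minimal sketch using Python’s standard library: the `RESOURCE_ATTRS` table, the function name `find_mixed_content` and the example.com addresses are my own illustrative assumptions, not something the analysed site or any plugin provides.

```python
from html.parser import HTMLParser

# Tag -> attribute that makes the browser fetch something.
# Plain <a href> links are deliberately left out: they are not mixed content.
RESOURCE_ATTRS = {
    "img": "src",
    "script": "src",
    "iframe": "src",
    "link": "href",
}


class MixedContentFinder(HTMLParser):
    """Collects (tag, url) pairs for resources referenced over http://."""

    def __init__(self):
        super().__init__()
        self.insecure = []

    def handle_starttag(self, tag, attrs):
        wanted = RESOURCE_ATTRS.get(tag)
        if wanted is None:
            return
        for name, value in attrs:
            if name == wanted and value and value.lower().startswith("http://"):
                self.insecure.append((tag, value))


def find_mixed_content(html):
    finder = MixedContentFinder()
    finder.feed(html)
    return finder.insecure


# Hypothetical page source, used only to demonstrate the check.
sample = """
<html><head>
<link rel="stylesheet" href="https://example.com/style.css">
<script src="http://example.com/app.js"></script>
</head><body>
<img src="http://example.com/photo.jpg" alt="Photo">
<a href="http://example.com/about">About</a>
</body></html>
"""

for tag, url in find_mixed_content(sample):
    print(f"insecure <{tag}>: {url}")
```

The check is intentionally crude: it treats every `<link href>` as a resource and ignores CSS and inline scripts, so take its output as a hint about where to look, not as a complete audit.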

The website is protected against spam by reCAPTCHA, but probably not by a security plugin or firewall.

Formal obligations

The website does not manage cookie consent. The site has no contact form and doesn’t sell anything, so one could defend the absence of both a data processing notice and terms and conditions. In short, no information is processed and no business relationships arise that would need to be regulated.

If there were a contact form on the site, it would need a checkbox, unticked by default, by which the sender agrees to the processing of personal data, and the site would have to state who the collected data is shared with, e.g. hosting, Google, accountants, co-workers, etc. The webmaster would also need to be able to process a request to delete the data. In short, what the GDPR requires.

The operator is not listed on the website. As the website contains affiliate links and a call for cooperation, which can be expected to mean media cooperation, the operator of the website should be named. This is required by law, specifically the Civil Code.

Data profile

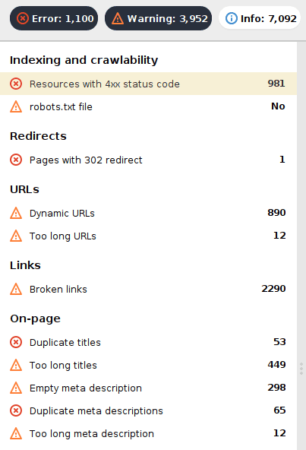

The data profile is my unfair advantage because I bought a license for the SEO PowerSuite applications, and I realize not everyone will do that. You could find similar data in other ways, but these programs do it fairly quickly and comprehensively.

In short, it is an analysis of all possible technical data and parameters that also relate to technical SEO and the overall technical condition and content of the website. The program finds

- broken links,

- duplicate or missing titles,

- pages that are too big,

- pages returning 500 or 40x errors, or pages with redirects,

- and many other interesting metrics.

It shows them in statistics, tables and graphs, so it is very easy to see what is wrong with the site. There are 536 “too big” pages on this site, the largest being 15 MB and the smallest 6 MB. And that is every page of the website! So the clear priority should be to reduce the amount of data transferred; one can assume that images are the cause. With pages this heavy, the website will never reach ideal speeds, which Google judges from mobile data. There are 80 broken internal links on the site, which should be easy to fix, and 30 broken links pointing away from the website. Pages with overly long addresses, duplicates and other errors can also be found.
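If you don’t have such a tool, the same kind of overview can be approximated from any list of crawled pages. The sketch below only illustrates the grouping step: the `PageRecord` structure, the 3 MB limit and the example.com records are assumptions of mine, not SEO PowerSuite’s output format or its actual thresholds.

```python
from dataclasses import dataclass

# Assumed limit for illustration only; pick whatever budget suits your site.
MAX_PAGE_BYTES = 3 * 1024 * 1024


@dataclass
class PageRecord:
    url: str
    status: int      # HTTP status code returned for the page
    size_bytes: int  # total data transferred for the page, images included


def summarize(records):
    """Sort crawl records into the problem groups mentioned above."""
    report = {"too_big": [], "redirects": [], "errors_40x": [], "errors_500": []}
    for rec in records:
        if 300 <= rec.status < 400:
            report["redirects"].append(rec.url)
        elif 400 <= rec.status < 500:
            report["errors_40x"].append(rec.url)
        elif rec.status >= 500:
            report["errors_500"].append(rec.url)
        elif rec.size_bytes > MAX_PAGE_BYTES:
            report["too_big"].append(rec.url)
    return report


# Hypothetical crawl results, not data from the analysed site.
crawl = [
    PageRecord("https://example.com/", 200, 15 * 1024 * 1024),
    PageRecord("https://example.com/about", 200, 400 * 1024),
    PageRecord("https://example.com/old-post", 301, 0),
    PageRecord("https://example.com/missing", 404, 0),
]

for group, urls in summarize(crawl).items():
    print(f"{group}: {len(urls)}")
```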

Web control, user experience, responsiveness

I am not an expert on this, but I often come across errors and flaws that stick out a mile, for example missing navigation elements or common elements that don’t work. There are usually quite a lot of these and they need to be communicated to the owner. As an example:

- a huge logo or header image that takes up valuable space and hinders the ease of use of the site,

- the lack of a breadcrumb trail (besides users, Google also appreciates it very much),

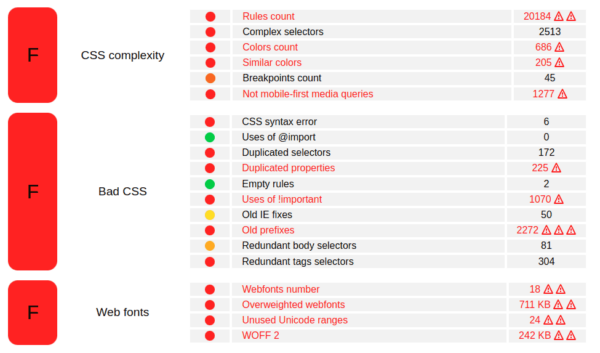

- inconsistent fonts, poorly chosen font styles, unnecessarily many fonts (which slow down page loading even more),

- absence of proven patterns and conventions (or the use of dark patterns): the logo is usually at the top left, Contact is the last item in the main menu, search is on the right, key links are in the footer, the text is dark on a light background, etc. Everything can be done differently, but it must be thought through; usually it is just inconsistency or a lack of diligence when working on the site 🙂

Tip: for examples of inappropriate design practices and elements, check out https://www.deceptive.design. It’s an interesting read.

Findability and SEO

When I look at a site and understand what it’s about, I search for a likely phrase in an incognito window and check whether the site appears on the first page. If it doesn’t, I have my answer. If I can’t even figure out what phrase would lead to the site, I have my answer right away. How are people supposed to find your site? What are they looking for? Are you offering them what they’re looking for?

The chances of being found increase if you take care of the website in every respect. We’ve already mentioned the technical side, but it’s also about caring for the content. The second important thing, then: update existing content and add new content.

Each page should have a heading structure along these lines:

- the first is h1, which is the page title or main heading,

- followed by h2,

- h3 can be below it,

- h4 can be below that; going deeper usually brings no benefit,

- the order of the headings must not be reversed, so a sequence like h1-h3-h2 is not allowed.

Lists also have their own markup; don’t fake them with minus signs or asterisks. Images should have alternative text (not visible on the page, but present in the code) and a caption that is visible and describes what is in the image. This is the bare minimum every page needs. Only then can you build on it and enrich the page with structured data for Google or social networks.
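The heading order and the image descriptions can be checked mechanically. Here is a minimal sketch with Python’s standard library; the `audit` function, the wording of its messages and the sample markup are hypothetical, and the rule “a heading may go at most one level deeper than the previous one” is my reading of the list above.

```python
from html.parser import HTMLParser

HEADINGS = {"h1", "h2", "h3", "h4", "h5", "h6"}


class PageAudit(HTMLParser):
    """Records heading levels in document order and images lacking alt text."""

    def __init__(self):
        super().__init__()
        self.levels = []
        self.images_without_alt = []

    def handle_starttag(self, tag, attrs):
        if tag in HEADINGS:
            self.levels.append(int(tag[1]))
        elif tag == "img":
            attr_map = dict(attrs)
            if not (attr_map.get("alt") or "").strip():
                self.images_without_alt.append(attr_map.get("src") or "")


def audit(html):
    parser = PageAudit()
    parser.feed(html)
    problems = []
    if not parser.levels or parser.levels[0] != 1:
        problems.append("page does not start with an h1")
    # A heading may go at most one level deeper than the one before it.
    for prev, cur in zip(parser.levels, parser.levels[1:]):
        if cur > prev + 1:
            problems.append(f"h{prev} is followed by h{cur}")
    for src in parser.images_without_alt:
        problems.append(f"image without alt text: {src}")
    return problems


# Hypothetical markup reproducing the h1-h3-h2 mistake.
page = '<h1>Title</h1><h3>Detail</h3><h2>Section</h2><img src="a.jpg"><img src="b.jpg" alt="A cat">'
for problem in audit(page):
    print(problem)
```

Purely decorative images may legitimately carry an empty alt attribute, so treat the image warnings as a list to review by hand.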

If you get the technical side right, you can put your energy into marketing. Paying for advertising to promote a site with technical flaws is ineffective.

Often you really just need to Google the phrases you think your readers are searching for. The search engine will suggest related phrases, which is valuable material. If it doesn’t suggest any, you’re off target and people are looking for something else. If it does, you have a topic for an article. Write it as well as you can and use the phrases from the related searches as h2 headings in the article.

Performance and speed

How fast the site is really matters. The problem is that people perceive speed differently from the way Google defines it, and Google is the authority that sets the direction and the rules. It has created many tools and methodologies for this purpose; a currently popular one is PageSpeed Insights (PSI), with Lighthouse under its wing. Lighthouse can also be run from a browser such as Chrome.

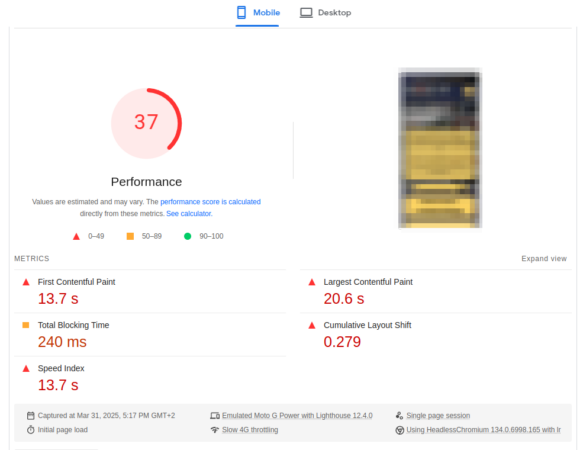

Lighthouse analyses the site along four dimensions: performance, accessibility, SEO and best practices. PageSpeed Insights then also presents the collected data as Core Web Vitals metrics. The bottom line: neither tool measures speed alone; speed is only one of the data sources and indicators behind the result. Therefore, the red number in the resulting image does not show page load speed.

The closest thing to “how fast is my page” is probably Time to Interactive, i.e. the time it takes for the page to load and become ready to respond to user input (e.g. scrolling). The lower, the better.

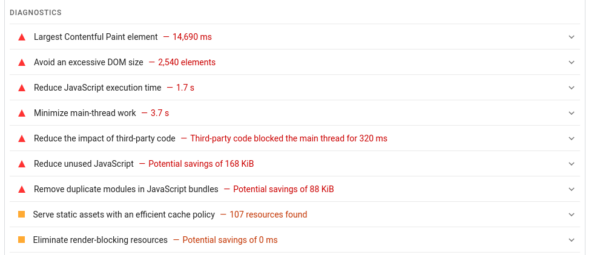

Since 2018, data measured on a mobile device has been taken as primary, which is very strict because mobile devices are less powerful than desktop computers. This brings us to the interpretation of the results in the figure.

The page (= a specific URL) scores 30 points out of 100 and meets only one of the indicators, Cumulative Layout Shift (CLS): the page layout does not shift or fall apart while loading. This is fine. But everywhere else, the page does not meet the required parameters:

- First contentful paint (FCP) – the first display of content comes after more than four seconds,

- Largest contentful paint (LCP) – how quickly the largest element in the design (image, block, photo gallery, etc.) is displayed,

- Total blocking time (TBT) – for almost half a second the page loading stops and waits for the scripts to be processed.

The measurements shown in the figure tell us that

- it takes more than four seconds before anything is displayed at all,

- it takes almost seven seconds (4.5+2.2) before the page is ready to accept user input,

- the largest element in the design takes almost thirteen seconds to load.
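To put such readings into perspective, they can be compared with the bands Lighthouse uses to colour its mobile results. The sketch below is an illustration only: the thresholds are the values I understand Lighthouse to publish at the time of writing, so verify them against the current documentation, and the measured numbers are approximations read from the text above (the CLS value is a pure placeholder).

```python
# (good up to, poor above) for each metric. Seconds, except CLS (unitless).
# Assumed from Lighthouse's published mobile scoring bands; verify before relying on them.
THRESHOLDS = {
    "FCP": (1.8, 3.0),
    "LCP": (2.5, 4.0),
    "TBT": (0.2, 0.6),
    "CLS": (0.1, 0.25),
}


def rate(metric, value):
    """Return 'good', 'needs improvement' or 'poor' for one measurement."""
    good, poor = THRESHOLDS[metric]
    if value <= good:
        return "good"
    if value <= poor:
        return "needs improvement"
    return "poor"


# Approximate readings taken from the text, not exact values from the report.
measured = {"FCP": 4.5, "LCP": 12.9, "TBT": 0.48, "CLS": 0.0}

for metric, value in measured.items():
    print(f"{metric}: {value} -> {rate(metric, value)}")
```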

Based on this information, the site developer should determine what is causing the high values and suggest improvements. Here we can assume that the cause may be one of the following situations (and let’s face it, they are repeated on every other site):

- cheap and slow hosting that can’t handle the complex design in Elementor,

- too many images on the page,

- images that are too large (in both dimensions and file size),

- too much external data (e.g. downloading photos from Instagram),

- low quality of the responsive version, which is not actually mobile-friendly enough and loads a lot of data unnecessarily,

- a poorly written theme that does not take into account the Google requirements discussed here (e.g. its code, the DOM, is too complex),

- poor page design and an unprofessional approach to content creation (huge thumbnail images),

- no optimization of the resulting code (no caching tools and methodologies are used).

What to do to make it better

The good news is that most of this can be changed and improved. The less good news, I guess, is that you either have to learn it and improve over time, or pay an expert to do the work. It’s not free and it won’t happen by itself. At this point, most site owners leave things as they are, so their sites never become successful, and they focus instead on promoting the site on social media because they find it more effective. But that is an endless Sisyphean task, and on top of that, they’re devoting their energy not to their own site but to the global corporation they’re sending their advertising money to.

Try to improve your blog first and then promote it. The results will be better and your energy better spent.